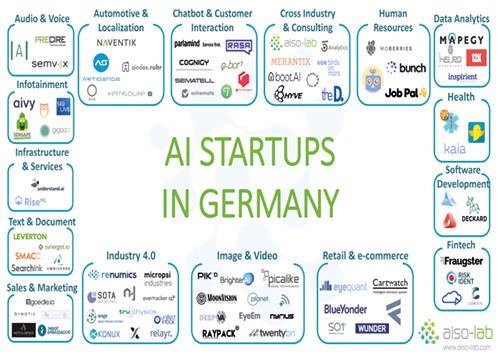

Europe has a growing and thriving Artificial Intelligence industry. Germany has one of the most robust AI ecosystems in Europe, second only to the leading United Kingdom, with Berlin as its central AI hub supporting 30 AI companies.

In this article, we present all the AI start-ups in Germany, grouped by their expertise, with a short introduction to each company. Moreover, since a picture is worth a thousand words, we have collected all the companies' logos in one image, available for you to download. Enjoy!

Audio & Voice

Aaron.ai uses Artificial Intelligence to help companies understand customer requests in natural language and handle them as desired.

PRECIRE Technologies has been developing the PRECIRE technology, which decodes written and spoken language and provides fascinating insights into the interaction of personality, expression, and behaviour.

SemVox offers efficient and secure solutions and technologies for voice control, multimodal human-machine interaction and intelligent virtual personal assistance systems, based on the latest AI technology and the outstandingly efficient ODP S3 software (Ontology-based Dialog Platform).

Automotive & Localisation

Aindex has built a location search engine to make it easy for you to find the apartment that suits you in the Ruhr area.

Artisense is a frontier-tech company that provides globally leading computer vision and artificial intelligence technology and data, which enable highly-scalable cost-effective Dynamic Global Mapping for GPS-independent autonomous navigation.

AVA combines big data, distributed computing, pattern recognition, and artificial intelligence to take the safety of individuals, organisations, businesses, cities, and countries to a whole new level.

The e4 qualification GmbH stands for comprehensive qualification and advice on the entire automotive process, from the first development steps to customer contact at the local dealer. e4 qualification GmbH offers a comprehensive portfolio for the expansion of competence of specialists and executives from the engineering and automotive sectors.

NAVENTIK was founded as a spin-off from Chemnitz University of Technology. Naventik's mission is to provide high-performance vehicle localisation technology to mass market applications.

Terraloupe works at the innovative edge of technology together with its partners, to combine current research with real business applications.

Chatbot and Customer Interaction

Powered by natural language understanding and image recognition, Chatshopper wants to make fashion search conversational, easy and delightful by introducing the bot Emma, which can send you product suggestions based on your shopping requests.

Cognigy provides enterprise-grade Natural Language Conversation technology, which is scalable, testable and highly customizable.

E-bot7 brings Artificial Intelligence to customer service and helps companies achieve higher customer service efficiency. The system analyses inbound messages and provides agents with accurate response suggestions.

Solvemate - Fredknows is a technology platform that automates leading companies’ customer support. Its virtual agents enable self-service support with near instantaneous solutions.

Parlamind is an Artificial Intelligence service company. Based on the latest research in Natural Language Processing and Machine Learning, its service analyses, routes, and autonomously answers incoming customer communication, working seamlessly together with its human counterparts as a member of the customer service team.

The open source Rasa Stack consists of machine learning libraries for language understanding (Rasa NLU) and dialogue management (Rasa Core). These are used by thousands of developers worldwide to build intelligent bots and assistants.

Ryter is a no-code, AI-powered SaaS building platform to automate expert knowledge and to build interactive, scalable modules.

Semantelli's compliance-sensitive, healthcare-specific social media and big data solutions help companies listen and engage in social media compliantly. Semantelli's solutions are also used by healthcare companies to manage adverse drug events reported by patients in social media, mobile apps and app store comments.

Twyla helps you to automate your customer support conversations through chatbots that learn from the conversations between your agents and your customers.

Cross-Industry & Consulting

5Analytics is a start-up from the Stuttgart area. Founded in 2016, it helps innovative companies dealing with topics such as digitization or industry 4.0.

aiso-lab builds Artificial Intelligence solutions for enterprises. The available services range from introductory presentations and training courses to complete products.

Birds on Mars' ambition is the ability to execute between tradition and transformation, bridging these gaps by connecting companies, teams and individuals with the growing opportunities of Artificial Intelligence and related technologies.

Boot.AI is a data science company specialised in Artificial Intelligence. By analysing large amounts of data, it aims to help its customers make better decisions and streamline their internal processes.

City.AI's goal is to make AI better by proactively tackling the issue of applying AI with the right people in cities across the globe. Local ambassadors are the backbone of City.AI and take the lead in growing and advancing this vibrant community.

Develandoo is a software accelerator specialized in building early-stage ventures based on AI technology.

Explosion AI is a digital studio specialising in Artificial Intelligence and Natural Language Processing. It designs custom algorithms, applications and data assets.

Ewald and Rössing is a well-attuned, highly specialised company that supports and consults enterprises in and before acute crisis situations. Their aim is to protect the perception of the firms with political, societal, media and economic stakeholders.

Hyve’s aim is to design and develop ground-breaking product innovations, services and business models within an end-to-end process starting with customer insight generation in the fuzzy front end of innovation and ending with series production and market entry.

LIQUID NEWSROOM is a technology company with a strong consulting arm. Its focus is on artificial intelligence in consulting, and to achieve that the company builds its own platform to analyse markets and decode a market's DNA.

Luminovo is a deep learning company helping corporations develop tailored applications. It was founded by a team of AI experts from Stanford University with experience applying AI in the wild, having worked at Google, Amazon, Intel and McKinsey.

Merantix is a research lab and venture builder in the space of Artificial Intelligence. The unparalleled team of AI researchers and cross-project technology stack allows Merantix to test and incubate projects much faster.

Project A is the operational VC that provides its ventures with capital, an extensive network and exclusive access to a wide range of operational expertise.

Solandeo has been operating as a competitive metering point operator since 2013. Solandeo has a Germany-wide network of installation partners. This allows fast and flexible installation planning for meters, control systems or battery storage systems.

Soundreply was founded to train & coach humans on-the-job — working with complex machines & vehicles in mission-critical environments, both on the ground and in the air.

The D. is a full-service power-force company providing deep technological expertise for different industries within API development, strategic consultancy, and software development. It specialises in cybersecurity and Artificial Intelligence.

Unternehmertum offers founders and start-ups a complete service, from the initial idea all the way to IPO. A team of experienced entrepreneurs, scientists and managers support founders with the development of their products, services and business models.

Valculus supports the digital transformation of commercial processes and provides tailored data solutions based on advanced AI technology and analytics.

Headquartered in Munich, VUI.Agency provides companies in all industries with Voice User Interface Technology. VUI.Agency specialises both in development and strategy consulting for voice interaction design covering multiple platforms.

Data Analytics

12k Research is a tech-driven research company which combines smart algorithms with a deep understanding of the tech landscape to help companies stay ahead.

Alexander Thamm, founded in Munich in 2012, is the first company to specialize exclusively in analytics, data science and big data. The goal is to help clients generate competitive advantages and added value by using big data.

Digital Helix develops a portfolio of start-up businesses in the digital health industry. They also consult the big players in the market who implement digital innovations.

Heurolabs' focus is on Artificial Intelligence and machine learning. It makes these technologies available and accessible through a highly abstracted set of APIs, available for both private and public clouds.

Inspirient simplifies data analysis with Artificial Intelligence by automatically searching for insights into your business data. Data-driven decisions are now at everybody's fingertips.

mapegy is building the Innovation Graph powering smarter, faster, better R&D. The Big Data and Visual Analytics company provides a unique SaaS that helps innovators map global innovation and connect the dots leading from technology to product.

QuantCo leverages expertise in data science, engineering, and economics to help organizations turn data into decisions. Headquartered in Boston, QuantCo has offices in Berlin, Tokyo, and Zurich.

With the current abundance of information and increasing data privacy concerns and regulations, the main goal of Statice is to facilitate complex and professional data analysis using privacy-preserving technologies.

Fintech

collectAI provides AI-based collection services to help its clients manage account receivables. Its sophisticated technology increases returns and improves the consumer experience by balancing between collection rates, costs and customer retention.

Fraugster is a German-Israeli anti-fraud company with the goal of eliminating fraud and increasing customers’ profits. To achieve this, they have invented an Artificial Intelligence technology that combines human-like accuracy with machine scalability.

MotionsCloud provides an intelligent claims solution for P&C insurers to streamline and automate claims processes. The solution reduces claims cycle time from days to hours, reduces claims expenses up to 75%, and improves the customer claims experience with mobile, artificial intelligence (AI image recognition), and video-enabled technologies which can integrate with legacy systems.

Risk Ident is a software development company based in Germany and the US that offers anti-fraud solutions to companies within the e-commerce, telecommunication and financial sectors. Its key products are FRIDA Fraud Manager, DEVICE IDENT Device Fingerprinting and EVE Evaluation Engine. Use cases include payment fraud, account takeovers, and fraud within account and loan applications.

Health

With a passion for technology and expertise in healthcare, Cellmatiq creates artificial intelligence products that will benefit patients and medical professionals alike. Their mission is to support medical professionals in complex image analysis and apply artificial intelligence (AI) to automatically detect filigree anatomical structures.

Cara by HiDoc Technologies empowers patients suffering from chronic digestive diseases to live a better life. Gohidoc takes digestive health beyond the pill, bringing together behavioural, microbial and nutritional data.

The healthcare.ai packages are designed to streamline healthcare machine learning. They do this by including functionality specific to healthcare, as well as simplifying the workflow of creating and deploying models.

Kaia health is a software-based healthcare platform. The company offers an app-based digital therapy focused on chronic pain that transforms the most effective offline treatments into digital therapies and connects these therapies to technologies that enhance the therapeutic effect.

xbird is a medical AI company dedicated to saving lives by disrupting the way we think about prevention and early detection of diseases. It is developing sophisticated machine learning algorithms that analyse sensor data from smartphones and wearables to predict and prevent critical health risks.

Human Resources

Bunch maps a team’s culture baseline, allowing companies to screen all candidates for team fit and predict their impact on culture and performance. The platform enables companies to create highly-aligned teams and shapes their culture to boost business growth.

Jobpal builds AI-powered chatbots that automate the communication between employers and candidates. For job seekers and candidates, chatbots offer a fundamentally new way to apply for a job — through their interface of choice, more intuitive and more engaging.

JobBot brings smart technology to hourly worker recruitment. They use automated bi-lingual chatbots and phone calls to engage job candidates fast, any time of day or night, then qualify them and book interviews.

MoBerries combines all hiring power of over 250+ companies and VCs to find the right candidate at the right time.

Image and Video

Brighter AI offers Visual Reconstruction as a service. Based on state-of-the-art deep learning, Brighter AI enhances images, for example by removing fog and rain or unpixelating license plates and faces.

Based on the latest research approaches in deep learning, DeepVA enables you to perform a profound and full examination of your company's data by extracting hidden meta-information from videos and images and making it accessible to your company.

EyeEm is a photography company that builds computer vision technology to connect its global creative community with iconic brands. EyeEm’s collection features more than 90 million images from more than 20 million photographers around the globe.

Moonvision empowers customers with object tracking solutions within various industries using machine learning. Their solutions can be used in different environments to analyse video footage and trigger events.

Nyris finds products and objects in images and videos in less than a second. Its visual search engine aggregates best in class algorithms for an unparalleled matching speed and quality.

Working with artificial intelligence and image recognition, Peat Technology helps farmers worldwide to protect their plants. With cutting-edge technologies, they help to secure harvests as well as safe food production.

The product portfolio of Picalike fuelled by Artificial Intelligence boosts the performance of online shops and marketplaces. Displaying personalised, visually similar items in product recommendations improves relevance and increases conversion rates, especially in fashion and furniture related e-commerce.

pikd is an artificial intelligence company with a deep background in machine learning and video technology. It combines research with years of hands-on experience in developing automation solutions and state-of-the-art computing infrastructure.

Planet-AI is a science and R&D driven software company with deep roots in Artificial Intelligence, machine learning and neural networks. Planet-AI brings to market software toolkits (SDK) that are easily integrated into end-to-end partner applications or operating systems.

RAYPACK.Ai builds AI-based solutions for visual computing and offers products for face recognition, video analysis, object detection and pose recognition.

TwentyBN is a computer vision company that builds advanced machine learning systems that understand video. They work with clients in industries like smart home or automotive to enhance the performance of video applications for activity recognition and human-machine interaction.

Industry 4.0

Actyx solves hard technical problems, constantly working toward a vision of smart, adaptive, sustainable and efficient manufacturing.

Arago’s solutions are built to automate enterprise IT and business operations. With their approach to intelligent IT and business operations automation, a machine with human problem-solving skills is taught by the experts.

Anacode is driven by a team of professionals in the fields of Deep Learning, NLP and international business. They develop analytics solutions which help companies to get an independent and unbiased understanding of their target markets.

Connective Elements is a Robotic Process Automation company for innovation management and strategic business development. They offer improved cost efficiency through the more efficient use of resources (server, storage, network, etc.) and reduced license costs.

Cogista provides solutions for operations strategy & configuration, production, supply chain management, predictive maintenance and production/quality control. Cogista solutions cover strategic, tactical and execution operations challenges while their solutions enable a jump start and an industrial standardization.

Evertracker is a platform technology that gathers gapless real-time positioning data through connected devices using GPS, Bluetooth, RFID, and other technologies. It analyses the data in real time, compares it to historical information and correlates it with existing sources, such as traffic or weather.

INNOPLEXUS is helping organisations move to continuous decision-making by generating broader, deeper and faster insights from structured and unstructured private and public data leveraging cutting-edge, patented Artificial Intelligence, Blockchain and advanced analytics technologies.

Integrana GmbH develops AI solutions and products on behalf of the customer. It advises the customer comprehensively on Machine Learning, AI, Data Science and Business Intelligence as well as supports the implementation.

Kahaura enables businesses to develop and implement AI solutions according to their requirements. They specialise in AI, IT consulting and software development.

KI-Group helps enterprises to build the business of the future. KI-Group believes in cutting-edge technologies and trusts in the power of motivated, open-minded individuals with tech and entrepreneurial mindsets.

The KONUX technology uses Artificial Intelligence to help clients to monitor their infrastructure continuously and to improve their operations. The end-to-end sensor data solution empowers industrial and rail companies to reach a new level of asset performance.

micropsi industries uses reinforcement learning to generate dynamic, sensor-driven behaviour for industrial robots. micropsi industries adds a third option for behaviour that needs to adapt dynamically to the environment, driven by camera images or force sensors.

Neokami's core platform has been optimised to solve a focused set of today’s pressing data security problems. The product enables companies to discover, classify and help secure sensitive data in the cloud, on-premise, or across their physical assets.

The n-join system immediately begins listening to all of a factory's machine-to-machine communications, analysing that data in real time, and providing robust, customised tools and insights to plant engineers and managers alike.

Renumics' proprietary machine learning techniques enable its customers to automate their CAE workflows easily and to access them from simple, easy-to-use interfaces.

Sota solutions let visions and technological lead become true. It uses the company’s flood of data to simulate, shape and improve their most important processes.

Tngtech supports its customers with state-of-the-art tools and innovative ideas. Their mission is to analyze and solve the strategic or routine IT problems which customers face.

True physics' aim is to support processes which are highly dependent on data generated at runtime or which have a high proportion of mechanical interaction between workpieces, tools and robots.

ThingsTHINKING differs from known approaches in NLP/NLU since it analyzes the meaning of concepts (aka semantics) of natural language. Therefore thingsTHINKING runs comprehensive and precise analyses, not unlike a human agent.

Theum synthesises complex pools of documents into powerful, virtual subject-matter experts that use AI to deliver clear, complete, ready-to-use answers to every question.

Unified Inbox Pte Ltd. develops and operates an intelligent Internet of Things (IoT) messaging platform called Unification Engine (UE). UE is a messaging platform that enables products and software to communicate with people and things and delivers solutions for smart homes, cities, and enterprises.

Xamla focuses on adaptive robotics using AI processes. The goal is, together with the worldwide robotics community, to ring in the era of personal robots. Robots are taught to see, feel, and understand the composition of a scene, which enables the programming of intelligent robot behaviour.

Infotainment

149 Technologies' focus is to combine its proprietary context engine with machine learning technology, so that it makes your calendar a bit more helpful every day, allowing you to accomplish tasks faster and to access information relevant to different events quickly, all in a single place.

Aivy's aim is to gain a deeper understanding of the intrinsic motivation behind the user's actions by finding patterns in factors that influence our everyday lives and determine actions.

gigaaa is a personal assistant as well as a chat messenger. The mission of gigaaa is to make organising your free time and related activities as easy as possible.

Sensape develops an artificial perception of infotainment systems. They offer the technology to interact with shopping windows or shop applications.

Tawny is a company dedicated to the fascinating breakthrough technology of Emotion AI. Today, machines, robots and intelligent agents have an emotional quotient (EQ) of zero. The company aims to change this soon, in a world where smart things adapt to human emotions to be more efficient, comfortable, healthy and safe.

Infrastructure

RiseML adds support for Machine Learning workloads and frameworks like TensorFlow. With RiseML, you can easily track experiments, run distributed learning experiments across multiple nodes, and perform hyperparameter optimization.

Understand.ai provides high-quality image annotation for autonomous driving, satellite imagery and medicine.

Retail and e-commerce

Blue Yonder combines brilliant software engineering with world-class data science and a unique cloud-based platform for predictive applications. With its numerous projects, they offer customers predictive analytics solutions for optimal decision-making – automated and in real time.

Cartwatch is a start-up company which develops solutions for article surveillance and anti-theft protection based on the latest technology in Computer Vision and Deep-Learning.

EyeQuant’s mission is simple: they help companies design websites that stand the test of attention and help users find what they are looking for immediately. EyeQuant-optimised websites evidently communicate benefits quicker, provide a much better user experience and yield significantly higher conversion rates.

Fedger uses advanced algorithms and machine intelligence to collect, structure, enrich and contextualise web data on demand and make it accessible via simple-to-use micro APIs in near real time.

Experts of SO1 have built a cross-retailer promotion platform based on cutting-edge algorithms and seamless (offline) retail integration. On this platform, both brands and retailers can target consumers truly individually and in real time.

Wunderai is building real-time cognitive AI applications to help brands to get nearer to the customers and grow through higher marketing effectiveness.

Sales and Marketing

Alexjacobi is an AI company which builds algorithms to use data as the centrepiece of creative ideas, not to justify them. And it uses them to create audiovisual communication that is relevant, respectful and authentic to every human.

The Adtelligence Personalization Cloud, a SaaS solution, optimizes the user experience of e-commerce shops, mobile sites and online portals by leveraging big data and machine learning.

Based on Machine Learning, goedle.io's B2B service predicts user churn in freemium apps and utilizes this information to retain users by means of re-targeting.

Inbot helps innovative B2B businesses to get warm introductions to potential customers globally. It helps to build trust, provide social proof, and rapidly grow businesses' B2B sales globally.

Neuroflash offers rapid market research based on neuroscience and big data. Its focus is on testing visual and verbal marketing material in the target audience and providing reliable results within days.

Qymatix Solutions specialises in the field of predictive sales analytics for B2B using Artificial Intelligence. Qymatix develops and commercialises a SaaS Solution that enables sales leaders in medium-sized business-to-business enterprises to achieve a much higher business success rate through better marketing decisions.

The Saas.co mission is to make sales easy with LISA, an Artificial Intelligence assistant for sales and customer care that reads, understands, categorises and drafts emails.

Software Development

Acellere has developed an intelligent software analytics platform called Gamma, as a part of their mission to start a global movement for clean code.

Deckard Intelligence is the AI engine that learns about the structure and performance of a software development team and substitutes hundreds of hours of imprecise human work with reliable and objective estimations, alerts and benchmarks.

Lateral uses machine learning to solve the discoverability problem, and it helps companies leverage their internal knowledge, as well as high-value external sources so that employees can easily find the relevant expertise and documents they require to complete their work.

Text & Document

Ambiverse develops technologies to understand, analyse, and manage Big Text collections automatically. Ambiverse is built on years of state-of-the-art research in text analytics.

Leverton develops and applies disruptive deep learning technologies to extract, structure and manage data from corporate documents in more than 20 languages. Their platform empowers corporations and investors to be more efficient and effective with their data and document management.

SearchInk is a machine intelligence company that unlocks knowledge and unleashes human resources. Based in Berlin, it serves enterprise customers to extract structured data from arbitrary documents.

Smacc is software that digitises and automates accounting and associated financial processes. It provides companies with constant, up-to-date access to business management data and analyses. Searching for receipts is also a thing of the past. Open interfaces allow integration into existing ERP and accounting systems such as SAP or Xero.

synergist.io is used by procurement departments, commercial teams and alternative legal service providers to execute high-volumes of recurring contracts with minimal effort.

Artificial Intelligence is about to find its way into all areas of modern society. At first, however, the focus was on areas of application that are less common in everyday life, such as medicine, research and high technology. A short time later, the world of private entertainment and mobility followed: intelligent assistants in smartphones and cars as well as in consumer electronics and the web. Now AI is also penetrating an area that is an integral part of everyday life outside the home: daily shopping.

Amazon, known primarily as an online retailer, is launching the concept of an intelligent supermarket. The company opened its first Amazon Go supermarket in Seattle, Washington, in January 2018. The aim here is to offer customers everyday shopping, especially of foodstuffs, in a new, simplified form: no queuing at the checkout, no scanning of barcodes, no employees. The customer enters the shop, takes goods from the shelves, packs them and leaves the store, and that is it. The amount due is debited from the customer's Amazon account, and the products taken are deducted from stock in inventory accounting.

What sounds so simple requires some intelligent technology in the background, and shopping does not work without prerequisites: customers need the (free) Amazon Go app and log in when entering the shop by having the system scan a QR code from their smartphone. After that, however, the phone can safely be put away, because from that point on the billing AI with its learning algorithm takes over the process. While the system continuously monitors them via camera and personal identification, customers take the goods from the shelves, which in turn act as scales. The number of pieces of a product taken is added to the customer's virtual shopping cart; goods that are put back are removed from it. When the purchase is finished, the AI registers that the customer is leaving the shop and shortly afterwards debits their Amazon account with the amount due.

The decisive technological progress in this respect is undoubtedly the naturalness with which the transfer of goods and money takes place. Customers do not have to scan goods, and the purchase is made under constant visual and sensory control of the AI, without relying on the honesty of the customers. But Amazon's intelligent supermarket cannot do without personnel: employees restock the shelves, prepare ready-to-eat food and supervise the sale of alcohol to comply with legal requirements. Amazon's statements on the question of whether the new concept is a feasibility study or the prototype of an entire chain of stores are also unclear. Generally speaking, Amazon's move may be seen as part of a development that is increasingly changing the way the food and retail trade functions and looks: gradually, cash registers and staff disappear from the shops, and the supermarket becomes, according to the vision, an intelligent autonomous system.
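The bookkeeping described above can be illustrated with a minimal sketch. It assumes that each shelf reports weight changes for a single product; the catalogue, the class `VirtualCart` and the method names are hypothetical and are not taken from Amazon's actual system.

```python
from collections import Counter
from dataclasses import dataclass, field

# Hypothetical catalogue: product -> (unit weight in grams, unit price in cents).
CATALOGUE = {
    "yoghurt": (150, 89),
    "sandwich": (220, 349),
}


@dataclass
class VirtualCart:
    """Per-customer cart that is updated from shelf weight changes."""

    items: Counter = field(default_factory=Counter)

    def on_shelf_weight_change(self, product: str, delta_grams: int) -> None:
        # A negative delta means goods were taken from the shelf,
        # a positive delta means goods were put back.
        unit_weight, _ = CATALOGUE[product]
        pieces = round(-delta_grams / unit_weight)
        self.items[product] += pieces
        if self.items[product] <= 0:
            # Everything was put back: remove the product from the cart.
            del self.items[product]

    def total_cents(self) -> int:
        # Amount to debit when the customer leaves the shop.
        return sum(CATALOGUE[p][1] * n for p, n in self.items.items())


cart = VirtualCart()
cart.on_shelf_weight_change("yoghurt", -300)   # two yoghurts taken
cart.on_shelf_weight_change("sandwich", -220)  # one sandwich taken
cart.on_shelf_weight_change("yoghurt", 150)    # one yoghurt put back
print(dict(cart.items), cart.total_cents())
```

A real store would additionally have to decide which customer caused each weight change, which is where the camera tracking mentioned above comes in.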

For some time now, machine learning and artificial neural networks have been widely discussed and are highly exciting topics of current research. Google has recently succeeded in creating an Artificial Intelligence (AI) that can produce its own "children", which are more reliable and precise than all comparable human-made AIs.

What is Machine Learning?

Machine learning can be defined as follows: learning, i.e. generating knowledge from experience, where the knowledge is gained automatically by a computer system. By automating data analysis, large amounts of data can be processed for pattern recognition quickly, much faster and more accurately than a human being could.

The AutoML project

By applying this approach, Google has now created its own Artificial Intelligence technique that can generate children. The AutoML AI proposes specific software architectures and algorithms for its AI children. These are then tested and improved based on the test results. To achieve this, the methodology of Reinforcement Learning is used. This technique means that the AI draws up a plan of action or strategy without any human input and adapts it using appropriate positive and negative feedback. Through iterative improvement over several cycles, the AI children get better and better at performing their tasks.
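The propose, test and adapt loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not Google's AutoML: the search space `CHOICES`, the scoring function `evaluate` and all parameter values are made up, and a simple preference table stands in for the controller network.

```python
import math
import random

# Hypothetical search space: each "child" is a (layers, width) combination.
CHOICES = {"layers": [2, 4, 8], "width": [16, 32, 64]}


def evaluate(child):
    """Stand-in for training and testing a child network.

    A real system would train the child and return its test accuracy;
    this made-up score simply peaks at 4 layers and width 32.
    """
    return 1.0 - 0.1 * abs(child["layers"] - 4) - 0.01 * abs(child["width"] - 32)


def softmax(prefs):
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def search(rounds=500, lr=0.5, seed=0):
    rng = random.Random(seed)
    # The controller's "strategy": one preference value per option.
    prefs = {k: [0.0] * len(v) for k, v in CHOICES.items()}
    baseline = 0.0  # running average of past rewards
    for _ in range(rounds):
        # 1. Propose a child by sampling from the current strategy.
        picks = {}
        for key in CHOICES:
            probs = softmax(prefs[key])
            picks[key] = rng.choices(range(len(probs)), weights=probs)[0]
        child = {k: CHOICES[k][i] for k, i in picks.items()}
        # 2. Test the child.
        reward = evaluate(child)
        # 3. Adapt the strategy: better-than-average children are
        #    reinforced (positive feedback), worse ones are discouraged.
        advantage = reward - baseline
        baseline += 0.1 * (reward - baseline)
        for key, chosen in picks.items():
            probs = softmax(prefs[key])
            for i in range(len(probs)):
                grad = (1.0 if i == chosen else 0.0) - probs[i]
                prefs[key][i] += lr * advantage * grad
    # Return the option the controller currently prefers for each choice.
    return {
        k: CHOICES[k][max(range(len(p)), key=p.__getitem__)]
        for k, p in prefs.items()
    }


print(search())
```

Over many rounds the preferences tend to drift towards options that scored well, which mirrors the iterative improvement over several cycles described above.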

Tasks of the AI

The task of the AI child is to detect objects such as humans, animals or cars in a video. NASNet, as the AI child is called, is very successful: it correctly identifies 82.7% of the objects in the videos it is shown. This makes it better than all AIs built by humans under similar conditions.

The automatic recognition of objects that has been achieved here is of interest to many industries. Systems produced in this way are not only more precise, they can also be much more complex. First applications can be found in self-driving cars, for example. Other companies will also benefit from being able to develop AIs for demanding tasks such as operations more quickly and accurately.

The ethical aspect

Despite the promising prospects for the future, large companies that are currently researching the field of Artificial Intelligence have to deal with ethical issues. For example, what happens if AutoML generates AI children faster than society can keep up? Or what happens if the AI develops a life of its own at some point? This is why the responsible development of Artificial Intelligence is one of the primary drivers of research at Amazon and Facebook as well as Google.

The Digital Demo Day in Düsseldorf was a very successful day!

We had many interesting conversations at our stand, both before and especially after our presentation, where we showed examples of visual computing with AI and our AI-Cam. It was our pleasure to welcome many new contacts at our booth and also to meet some friends from our network.

Our special thanks go to Klemens Gaida and his team for organising this great event!

In recent years, the rapid development of Artificial Intelligence has reached levels that were once considered pure science fiction. Every novelty that points in the direction of machine consciousness is viewed with particular attention and, from some perspectives, with unusually strong fears. Especially in the area of perception and its manipulation, the performance of machines increasingly astounds amateurs and even experts.

A good example is the work of an AI research group in the development department of Nvidia, a company known primarily as a manufacturer of graphics cards. The researchers succeeded in teaching an artificial neural network to credibly alter specific characteristics of image and film sources. With this technology, it is possible to change the weather in a video or the breed of a dog in a picture. The crucial point here is that representations in images or video can be manipulated almost arbitrarily without a human editor having to intervene. The results are not yet perfect, but they are likely to be even more convincing in the future.

Scientists from the University of Kyoto went one step further: they used a similar procedure to allow Artificial Intelligence to recognise the mental perceptual images in the human brain so that the AI reads a person's thoughts to some extent. In detail, this works as follows: a neural network is trained to match images that a human subject looks at with data on the person's corresponding brain activity, obtained by functional magnetic resonance imaging (fMRI). In this way, the AI learns to associate external stimuli (pictures) with the internal states in the brain (the MRI patterns). If, after this learning phase, it receives only MRI data as input, it can reconstruct from this information what the person perceives without ever having seen the images itself. The images of these mental processes produced by the AI are anything but photorealistic. However, they do recognisably resemble the original image.

A question as threatening as "Can Artificial Intelligence read your thoughts?" should not cause so much discomfort if you take a closer look. The actual "reading of thoughts", the look into the brain, is still done by MRI, and that is what it is meant for. Artificial Intelligence, on the other hand, is limited to pattern recognition through a neural network and the application of what has been learned to new data. The strength of neural networks lies in their speed: while people need hours to learn new lessons, such a system can run millions of learning processes at the same time. A large number of training passes creates a very finely differentiated system of weightings and states between the neurons in the network, so that the result of continuous training becomes more and more similar to the original. The possible applications are manifold, but in one respect above all this technology offers fascinating promises for the future: it could enable people who cannot communicate in speech or writing to communicate their thoughts and inner images. Further applications are also conceivable, such as the direct "uploading" of intellectual content into computer networks.

The Digital Demo Day is just around the corner, and we are pleased to be part of it! On 1 February, we will be presenting our digital solutions and innovative AI technologies at the Digital Hub in Düsseldorf.

Join us at the Digital Demo Day to see the latest digital trends, talk about applications and get profound insights.

Tickets can be purchased here

See you there!

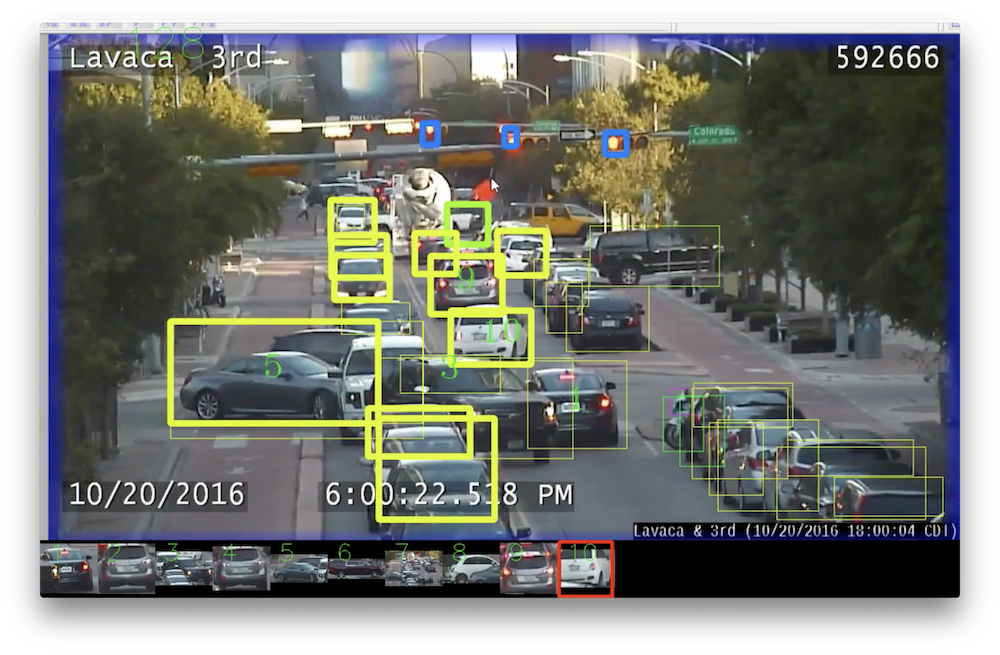

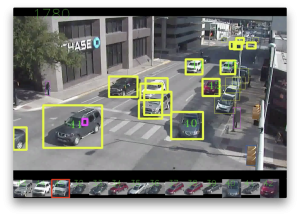

Cameras at intersections and other busy roads are not new. They enable traffic monitoring and provide images in case of accidents. In the future, the camera footage could be even more valuable with the help of Artificial Intelligence. The evaluation of traffic data by supercomputers, which can analyse traffic in a short time, could soon ensure that roads become safer. Scientists from the Texas Advanced Computing Center (TACC), the Center for Transportation Research at the University of Texas and the Texan city of Austin are working on programs that use deep learning and data mining to make roads safer and eliminate traffic problems.

The scientists are developing an Artificial Intelligence that uses deep learning to evaluate video recordings of traffic junctions. This software should be able to recognise and classify objects correctly. The objects are cars, buses, trucks, motorcycles, traffic lights and people. The software determines how these objects move and behave. In this way, information can be gathered and analysed more precisely to prevent traffic problems. The aim is to develop software that helps transport researchers evaluate data. The Artificial Intelligence should be flexible in its use and, in the future, be able to recognise traffic problems of all kinds without anyone having to program it explicitly for this purpose.

Thanks to deep learning, supercomputers classify the objects correctly and estimate the relationship between the detected objects in road traffic by following the movements of cars, people and so on. Once this was working, the scientists gave the software two tasks: first, to count the number of vehicles driving along a road, and second, more difficult, to record near collisions between cars and pedestrians. The Artificial Intelligence evaluated 10 minutes of video footage and counted all vehicles with 95% accuracy. Being able to measure traffic accurately is a valuable skill of the supercomputer. At present, numerous expensive sensors are still needed to obtain such data, or studies must be carried out that produce only specific data. The software, on the other hand, can monitor the volume of traffic over an extended period and thus provide far more accurate figures. This makes it possible to make better decisions on the design of road transport.
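The article does not say how the software counts vehicles. One common approach, shown here purely as an illustration, is to count tracked objects that cross a virtual line in the image. The track format (one list of vertical positions per tracked vehicle) and the function name are assumptions.

```python
def count_line_crossings(tracks, line_y):
    """Count tracked objects whose path crosses the horizontal line y = line_y.

    `tracks` maps a hypothetical track ID to the object's vertical position
    in each consecutive video frame.
    """
    count = 0
    for positions in tracks.values():
        for previous, current in zip(positions, positions[1:]):
            # A crossing happens when consecutive positions lie on
            # different sides of the counting line.
            if (previous < line_y) != (current < line_y):
                count += 1
                break  # count each vehicle at most once
    return count


tracks = {
    "car_1": [80, 95, 105, 120],  # crosses the line moving down the image
    "car_2": [130, 110, 90],      # crosses the line moving up the image
    "bus_1": [10, 20, 30],        # never reaches the line
}
vehicles_counted = count_line_crossings(tracks, line_y=100)
```

Here `vehicles_counted` is 2. The hard part in practice is producing reliable tracks from raw video, which is the job of the deep learning model.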

In the case of near collisions, the Artificial Intelligence enabled scientists to automatically identify situations in which pedestrians and vehicles came dangerously close to each other. This makes it possible to determine particularly dangerous points in the road network before accidents happen. The data analysed could prove very revealing when it comes to eliminating future traffic problems.
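As a rough illustration of how such situations could be flagged, the following sketch compares the positions of a pedestrian and a vehicle frame by frame and reports the frames in which they come closer than a chosen distance. The coordinates, the threshold and the function name are assumptions; the actual criteria used by the researchers are not described here.

```python
import math


def find_near_collisions(pedestrian_track, vehicle_track, min_distance):
    """Return the frame indices in which the two objects are too close.

    Each track is a list of (x, y) positions, one per video frame.
    """
    flagged_frames = []
    for frame, (pedestrian, vehicle) in enumerate(zip(pedestrian_track, vehicle_track)):
        if math.dist(pedestrian, vehicle) < min_distance:
            flagged_frames.append(frame)
    return flagged_frames


pedestrian = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
vehicle = [(10.0, 0.0), (5.0, 0.0), (3.0, 0.0)]
close_frames = find_near_collisions(pedestrian, vehicle, min_distance=2.0)
```

In this example only the last frame is flagged, because the distance shrinks from 10 to 4 to 1. A more realistic version would also take speed and direction into account.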

The next project: the software will learn where pedestrians cross the road, how drivers react to signs pointing out pedestrian crossings, and how far pedestrians are willing to walk to reach a crossing. The project by the Texas Advanced Computing Center and the University of Texas illustrates how deep learning can help reduce the cost of analysing video material.

I initiated and helped to organise the already famous Handelsblatt AI Conference, which will take place in Munich on March 15th and 16th.

We were able to put together a great line-up of speakers, and I am really looking forward to discussing the latest developments in AI with Damian Borth, Annika Schröder, Klaus Bauer, Norbert Gaus, Bernd Heinrichs, Andreas Klug, Dietmar Harhoff, Alexander Löser, Gesa Schöning, Oliver Gluth, Reiner Kraft, Thomas Jarzombek and Daniel Saaristo.

We are very proud to have Jürgen Schmidhuber, one of the godfathers of AI and the inventor of LSTM, with us.

Join us in getting profound insights and having exciting conversations.

See you in Munich!

Joerg Bienert

Bot better than a human for the first time

The internationally competing AI developers had each developed programs that can read and compete in the SQuAD test. In doing so, they demonstrated how far machines have come in learning and understanding language. Almost all global technology companies, including Google, Facebook, IBM and Microsoft, use the prestigious Stanford Question Answering Dataset (SQuAD) reading comprehension test to measure themselves against each other and against an average human subject. SQuAD contains more than 100,000 questions about the content of over 500 Wikipedia articles. It asks questions with objectifiable answers, such as "What causes rain?". Other questions that needed to be answered included "What nationality did Nikola Tesla have?", "What is the size of the rainforest?" and "Which musical group performed in the Super Bowl 50 Halftime Show?". The result: the bot held its own. Not only that, but for the first time it was better than humans.

Machine learning development

The first AI reading machine to do better on SQuAD than a human being is the new software of Alibaba Group Holding Ltd., developed in Hangzhou, China, by the company's Institute of Data Science and Technologies. The research department of the Chinese e-commerce giant said that its natural language processing program achieved a test score of 82.44 points, beating the previous record of 82.30 points, which was still held by a human. With this, Alibaba continues to assert its leadership role in the development of machine learning and Artificial Intelligence technologies. However, Microsoft is hot on Alibaba's heels: the test result of Microsoft's reading machine was only just exceeded by that of the Alibaba program.

What makes bots better

The bot program must be able to accurately filter the wide variety of available information so that it gives correct and only relevant answers. The program, which models the human brain as a neural network, works from paragraphs down to sentences and from there to words, trying to identify the sections that might contain the answer it is looking for.
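The idea of narrowing the search from paragraphs to sentences to words can be sketched with plain word overlap. This is a deliberately simple stand-in and not Alibaba's model: a neural network learns which passages are relevant, whereas this sketch only counts shared words. All function names are hypothetical.

```python
import re


def word_set(text):
    """Split a text into a set of lower-case words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_sentence(question, paragraphs):
    """Pick the paragraph, then the sentence, that shares most words with the question."""
    question_words = word_set(question)
    # Step 1: choose the most promising paragraph.
    paragraph = max(paragraphs, key=lambda p: len(question_words & word_set(p)))
    # Step 2: choose the most promising sentence inside that paragraph.
    sentences = re.split(r"(?<=[.!?])\s+", paragraph)
    return max(sentences, key=lambda s: len(question_words & word_set(s)))


paragraphs = [
    "Nikola Tesla was a Serbian-American inventor. He worked on alternating current.",
    "Rain is caused by water droplets that condense in clouds. Clouds form when moist air rises.",
]
answer_sentence = best_sentence("What causes rain?", paragraphs)
```

For the question "What causes rain?", the sketch selects the sentence "Rain is caused by water droplets that condense in clouds." because it is the only one that shares a word with the question. It would fail as soon as the question is phrased with different vocabulary, which is exactly the gap that learned models try to close.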

The head of development for AI and language programs, Si Luo, said the findings make it clear that machines can now answer objective questions with high accuracy.

This development opens up new possibilities for Artificial Intelligence and its use in customer service. In the future, reading robots could, for example, also answer medical inquiries via the Internet, which would significantly reduce the need for human input. Alibaba's bot program called "Xiaomi" relies on the capabilities of the reading AI engine and has already been used successfully, for instance on Singles' Day, Asia's most significant annual shopping event, held on November 11.

AI further ahead of an existing challenge

However, bots still have difficulties with queries that are vague, colloquial, ironic or simply grammatically incorrect. If there are no prepared answers, the program cannot find a suitable solution in the text it reads, so it responds inadequately or incorrectly.

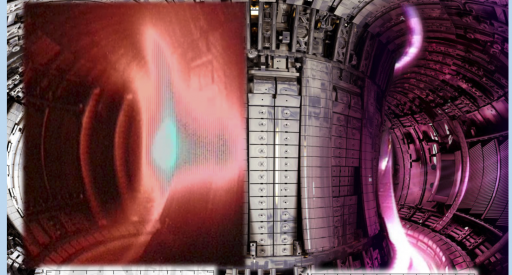

The fusion of atomic nuclei could become one of the solutions to future energy problems and may represent a significant advance in the technological development of humanity. Fusion reactions are still difficult to control, however, and can damage the so-called tokamaks, the fusion reactors that generate energy from plasma using magnetic fields. Disruptions that interrupt the fusion process can occur at any time. Artificial Intelligence can help to anticipate these disruptions and react to them correctly so that the damage is minimised and the process runs as smoothly as possible. Scientists are in the process of developing computer programs that enable them to predict the behaviour of plasma.

Scientists from Princeton University and the U.S. Department of Energy's Princeton Plasma Physics Laboratory are conducting initial experiments with Artificial Intelligence to test the software's predictive ability. The group is led by William Tang, a renowned physicist and professor at Princeton University. He and his team are developing the code for ITER, the "International Thermonuclear Experimental Reactor" in France. They aim to demonstrate the applicability of Artificial Intelligence in this area of science. The software is called "Fusion Recurrent Neural Network", FRNN for short, and uses a form of deep learning. This is an improved variant of machine learning that can process considerably more data. FRNN is particularly good at evaluating sequential data with long-range patterns. The team is the first to use a deep learning program to predict the complexities of fusion reactions.

This approach allowed the team to make more accurate predictions than before. So far, the experts have tested the software at the Joint European Torus in Great Britain, the largest tokamak in operation. Soon it will be ITER's turn. For ITER, the Fusion Recurrent Neural Network should be so advanced that it correctly predicts up to 95% of incidents. At the same time, the program should give fewer than 3% false alarms. The deep learning program runs on GPUs ("Graphics Processing Units") rather than on lower-performance CPUs. These make it possible to run thousands of programs at the same time. This is demanding work for the hardware and has to be distributed across many GPUs. The Titan supercomputer at the Oak Ridge Leadership Computing Facility, currently the fastest supercomputer in the United States, is used for this purpose.
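The two targets, 95% correct predictions and fewer than 3% false alarms, measure different things, and a short calculation makes the distinction concrete. The sketch below assumes that the first figure is the share of real disruptions that were predicted and the second the share of disruption-free runs that nevertheless triggered an alarm; the article does not define them precisely, and the function name is hypothetical.

```python
def alarm_rates(predicted, actual):
    """Return (detection rate, false alarm rate) for a series of plasma runs.

    `predicted[i]` is True if an alarm was raised for run i, and
    `actual[i]` is True if run i really ended in a disruption.
    Assumes at least one disruptive and one disruption-free run.
    """
    true_alarms = sum(1 for p, a in zip(predicted, actual) if p and a)
    false_alarms = sum(1 for p, a in zip(predicted, actual) if p and not a)
    disruptions = sum(1 for a in actual if a)
    quiet_runs = len(actual) - disruptions
    return true_alarms / disruptions, false_alarms / quiet_runs


predicted = [True, True, False, True, False]
actual = [True, True, True, False, False]
detection_rate, false_alarm_rate = alarm_rates(predicted, actual)
```

In this made-up example, two of three disruptions are caught (a detection rate of about 67%) and one of two quiet runs raises an alarm (a false alarm rate of 50%), so the system would miss both targets. Raising alarms more readily improves the first number but worsens the second, which is why both are specified together.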

The first experiments were carried out on Princeton University computers, where it turned out that FRNN is perfectly capable of processing the vast amounts of data and making useful predictions. In this way, Artificial Intelligence provides a valuable service to science by predicting the behaviour of plasma with great accuracy. FRNN will soon be used at tokamaks all over the world and will make an essential contribution to the progress of humanity.